
Getting historical Tweets using the v2 full-archive search endpoint


Introduction

The Search Tweets endpoints in Twitter API v2 return Tweets related to topics of interest, based on a search query that you write. Two endpoints are available with v2 Search Tweets. Recent search is available to all developers with an approved account and can search for Tweets up to seven days old. Full-archive search is only available to researchers approved for the Academic Research product track and can search the entire archive of Tweets dating back to March 2006.

You can see our full search offering on our search overview page. If you are using the enterprise search API, consider reading through our learning path on building rules instead.

These Search Tweets endpoints address one of the most common use cases for academic researchers, who might use them for longitudinal studies or to analyze a past topic or event.

This tutorial provides a step-by-step guide for researchers who wish to use the full-archive search endpoint to search the complete history of public Twitter data. It will also demonstrate the different ways to build a dataset, such as by retrieving geo-tagged Tweets, and how to page through the available Tweets for a query.

Prerequisites

Currently, this endpoint is only available as part of the Academic Research product track. In order to use this endpoint, you must apply for access. Learn more about the application and requirements for this track.

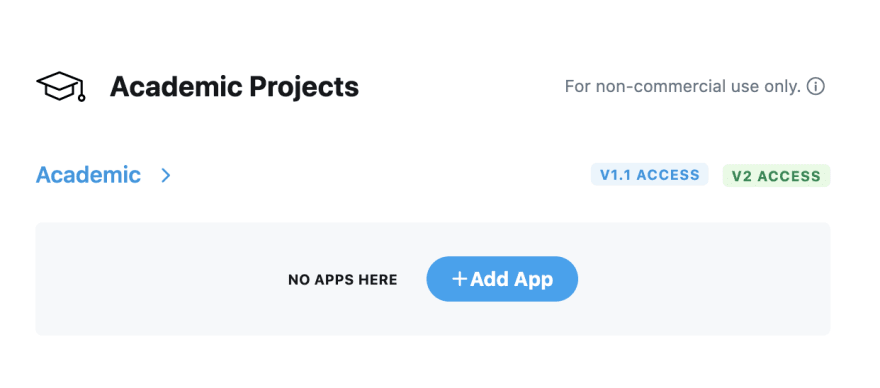

Connect an app to the academic project

Once you are approved to use the Academic Research product track, you will see your Academic Project in the developer portal. From the "Projects and Apps" section, click on "Add App" to connect your Twitter App to the Project.

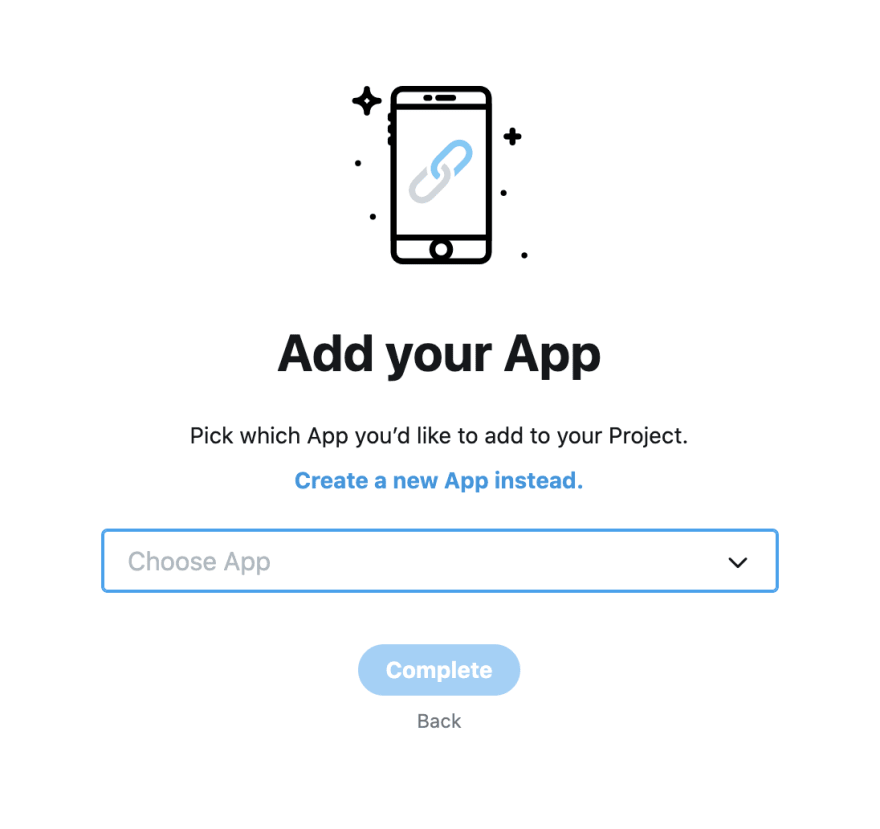

Then, you can either choose an existing App and connect it to your Project, as shown below:

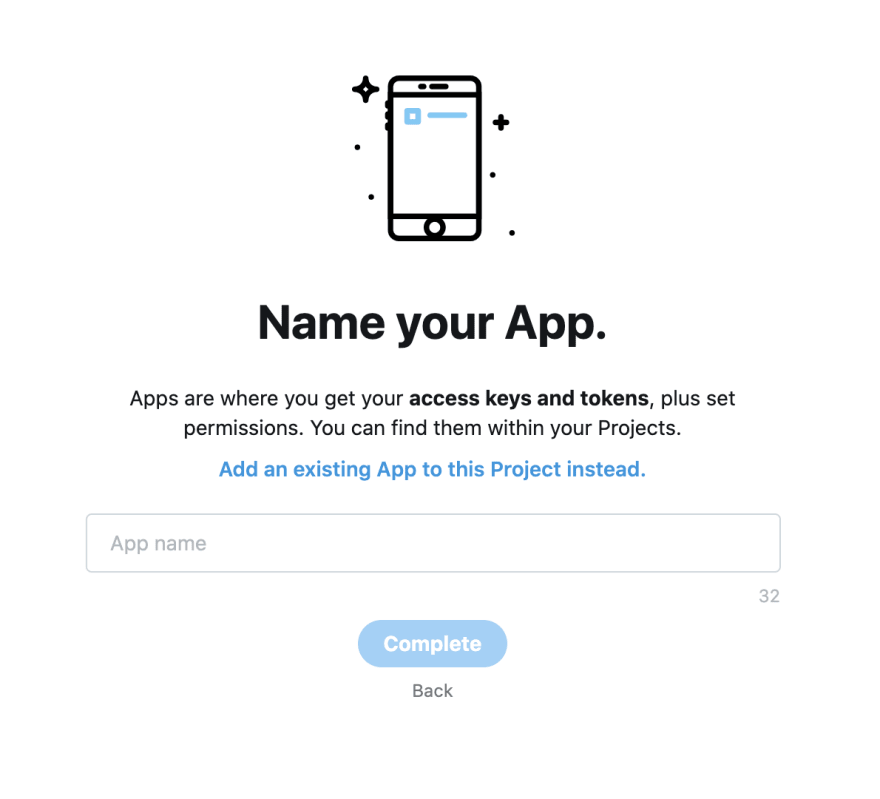

Alternatively, you can create a new App, give it a name, and click "Complete" to connect it to your Academic Project.

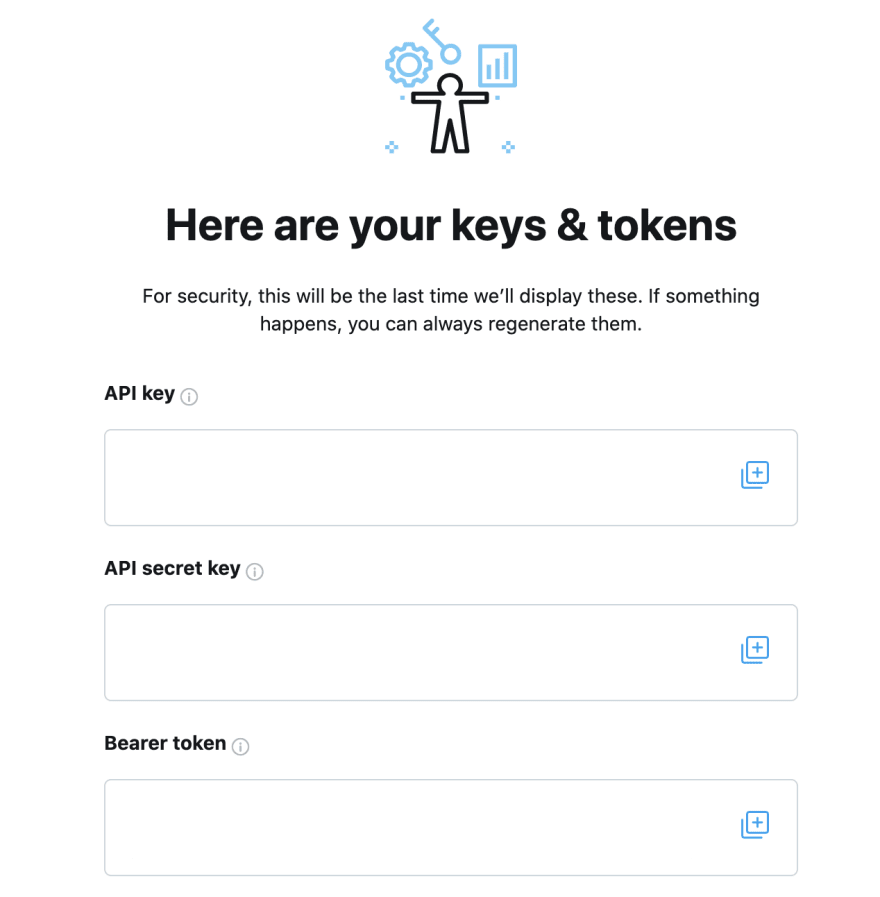

This will give you your API keys and Bearer Token that you can then use to connect to the full-archive search endpoint.

Please note

The keys in the screenshot above are hidden, but in your own developer portal you will be able to see the actual values for the API Key, API Secret Key, and Bearer Token. Save these keys and the Bearer Token because you will need them to call the full-archive search endpoint.

Connecting to the full-archive search endpoint

The cURL command below shows how you can get historical Tweets from the @TwitterDev handle. Replace $BEARER_TOKEN with your own Bearer Token (the header is in single quotes, so the shell will not expand it as a variable), paste the full request in your terminal, and press "return".

curl --request GET 'https://api.twitter.com/2/tweets/search/all?query=from:twitterdev' --header 'Authorization: Bearer $BEARER_TOKEN'

You will see the response JSON.

By default, only the 10 most recent Tweets will be returned. If you want more than 10 Tweets per request, you can use the max_results parameter and set it to a maximum of 500 Tweets per request, as shown below:

curl --request GET 'https://api.twitter.com/2/tweets/search/all?query=from:twitterdev&max_results=500' --header 'Authorization: Bearer $BEARER_TOKEN'
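The same request can also be prepared from a script. The following is a minimal Python sketch using only the standard library, not an official client. The names SEARCH_ALL_URL, build_search_request, and send are hypothetical helpers introduced here for illustration, and the only validation applied is the 500-Tweet upper bound described above.

```python
import json
import urllib.parse
import urllib.request

# Endpoint URL taken from the cURL examples in this tutorial.
SEARCH_ALL_URL = "https://api.twitter.com/2/tweets/search/all"


def build_search_request(query, bearer_token, max_results=None):
    """Prepare (but do not send) a GET request for full-archive search."""
    params = {"query": query}
    if max_results is not None:
        # The tutorial states a maximum of 500 Tweets per request.
        if max_results > 500:
            raise ValueError("max_results cannot exceed 500")
        params["max_results"] = max_results
    # urlencode percent-encodes reserved characters such as ':' in the query.
    url = SEARCH_ALL_URL + "?" + urllib.parse.urlencode(params)
    headers = {"Authorization": f"Bearer {bearer_token}"}
    return urllib.request.Request(url, headers=headers)


def send(request):
    """Send a prepared request and return the parsed JSON response."""
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

To run it, you might call send(build_search_request("from:twitterdev", token, max_results=500)), where token is your own Bearer Token read from an environment variable or a configuration file rather than hard-coded in the script.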

Building queries

As you can see in the example calls above, the query parameter specifies the data that you want to search for. For example, if you want all Tweets that contain the word covid or the word coronavirus, you can use the OR operator within parentheses. Your query will be (covid OR coronavirus), and your API call will look like the following:

curl --request GET 'https://api.twitter.com/2/tweets/search/all?query=(covid%20OR%20coronavirus)&max_results=500' --header 'Authorization: Bearer $BEARER_TOKEN'

Similarly, if you want all Tweets that contain the word covid19 and are not retweets, you can use the is:retweet operator with the logical NOT (represented by -). Your query will be covid19 -is:retweet, and your API call will be:

curl --request GET 'https://api.twitter.com/2/tweets/search/all?query=covid19%20-is:retweet&max_results=500' --header 'Authorization: Bearer $BEARER_TOKEN'

Check out this guide for a complete list of operators that are supported in the full-archive search endpoint.
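Queries like the two above can be assembled and percent-encoded programmatically. The sketch below is illustrative only: any_of, negate, build_query, and encode_query are hypothetical helper names, not part of any Twitter library. It assumes that clauses separated by a space are combined with logical AND, as in the covid19 -is:retweet example.

```python
from urllib.parse import quote


def any_of(*terms):
    """Group terms with OR inside parentheses, e.g. (covid OR coronavirus)."""
    return "(" + " OR ".join(terms) + ")"


def negate(clause):
    """Prefix a keyword or operator with '-' to express logical NOT."""
    return "-" + clause


def build_query(*clauses):
    """Join clauses with single spaces (assumed to mean logical AND)."""
    return " ".join(clauses)


def encode_query(query):
    """Percent-encode a query for use in a URL.

    Parentheses and colons are left unescaped so that the result matches
    the style of the cURL examples; spaces become %20.
    """
    return quote(query, safe="():")


if __name__ == "__main__":
    print(encode_query(any_of("covid", "coronavirus")))
    print(encode_query(build_query("covid19", negate("is:retweet"))))
```

Building the query as a plain string first and encoding it in one final step keeps the readable form available for logging, and avoids encoding the same text twice.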

Using the start_time and end_time parameters to get historical Tweets

By default, the full-archive search endpoint returns Tweets from the last 30 days. If you want Tweets that are older than 30 days, use the start_time and end_time parameters in your API call. These parameters must be in a valid RFC 3339 date-time format, for example 2020-12-21T13:00:00.00Z. Thus, if you want all Tweets from the @TwitterDev account for the month of December 2020, your API call will be:

curl --request GET 'https://api.twitter.com/2/tweets/search/all?query=from:Twitterdev&start_time=2020-12-01T00:00:00.00Z&end_time=2021-01-01T00:00:00.00Z' --header 'Authorization: Bearer $BEARER_TOKEN'
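Hand-writing timestamps is error-prone, so it can help to generate them. The following sketch converts timezone-aware Python datetimes to UTC RFC 3339 strings and builds the start_time and end_time values for a whole calendar month. The helper names to_rfc3339 and month_window are hypothetical, and the output omits fractional seconds, which RFC 3339 treats as optional.

```python
from datetime import datetime, timezone


def to_rfc3339(moment):
    """Format a timezone-aware datetime as a UTC RFC 3339 timestamp."""
    if moment.tzinfo is None:
        # A naive datetime is ambiguous, so refuse it instead of guessing.
        raise ValueError("expected a timezone-aware datetime")
    return moment.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")


def month_window(year, month):
    """Return start_time/end_time parameters spanning one calendar month."""
    start = datetime(year, month, 1, tzinfo=timezone.utc)
    # month // 12 is 1 only for December, which rolls over into the next year.
    end = datetime(year + month // 12, month % 12 + 1, 1, tzinfo=timezone.utc)
    return {"start_time": to_rfc3339(start), "end_time": to_rfc3339(end)}


if __name__ == "__main__":
    print(month_window(2020, 12))
```

The returned dictionary can be merged into the other query parameters before they are URL-encoded.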

Getting geo-tagged historical Tweets

Geo-tagged Tweets are Tweets that have geographic information associated with them, such as a city, state, or country.

Using the has:geo operator

If you want to get Tweets that have geo data, you can use the has:geo operator. For example, the following cURL request will get only those Tweets from the @TwitterDev handle that have geo data:

curl --request GET 'https://api.twitter.com/2/tweets/search/all?query=from:twitterdev%20has:geo' --header 'Authorization: Bearer $BEARER_TOKEN'

Using the place_country operator

Similarly, you can limit the results to geo-tagged Tweets from a specific country using the place_country operator. The cURL command below will get all Tweets from the @TwitterDev handle that are tagged with a place in the United States:

curl --request GET 'https://api.twitter.com/2/tweets/search/all?query=from:twitterdev%20place_country:US' --header 'Authorization: Bearer $BEARER_TOKEN'

The country is specified above using its two-letter ISO 3166-1 alpha-2 code. Valid ISO codes can be found here.
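As a small illustration, the two geo operators can be appended to an existing query string as follows. The helpers with_geo and in_country are hypothetical names. The check only confirms that the code has the two-letter shape; it does not verify that the code is actually assigned to a country.

```python
import re


def with_geo(query):
    """Restrict a query to Tweets that carry geo data."""
    return f"{query} has:geo"


def in_country(query, country_code):
    """Restrict a query to Tweets tagged with a place in one country."""
    code = country_code.upper()
    # Shape check only: two ASCII letters, as in ISO 3166-1 alpha-2.
    if not re.fullmatch(r"[A-Z]{2}", code):
        raise ValueError(f"not a two-letter country code: {country_code!r}")
    return f"{query} place_country:{code}"


if __name__ == "__main__":
    print(with_geo("from:twitterdev"))
    print(in_country("from:twitterdev", "us"))
```

The resulting strings still need to be URL-encoded before they are placed in the query parameter.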

Getting more than 500 historical Tweets using the next_token

As mentioned above, you can get at most 500 Tweets per request from the full-archive search endpoint. If more Tweets match your query than fit in one response, the JSON response will include a next_token, which you can append to your API call to get the next page of Tweets for this query. The next_token is in the meta object of the JSON response, which looks something like this:

{
  "newest_id": "12345678...",
  "oldest_id": "12345678...",
  "result_count": 500,
  "next_token": "XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX"
}

To get the next available Tweets, pass the next_token value from this meta object as the next_token parameter in your next API call to the full-archive search endpoint, as shown below. Use your own Bearer Token and the next_token value returned by your previous API call.

curl --request GET 'https://api.twitter.com/2/tweets/search/all?max_results=500&query=covid&next_token=XXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXXX' --header 'Authorization: Bearer $BEARER_TOKEN'

In this way, you can keep checking whether a next_token is available and, if you have not yet collected your desired number of Tweets, call the full-archive search endpoint again with the new next_token. Keep in mind that the full-archive search endpoint counts towards your total Tweet cap, that is, the number of Tweets per month that you can get from the Twitter API per Project. Be careful with your paging logic so that you do not inadvertently exhaust your Tweet cap.
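The paging logic described above can be sketched as a loop that stops when no next_token is returned or when a chosen limit is reached. This is a minimal illustration, not a reference implementation. The names fetch_page and collect_tweets are hypothetical, the one-second pause between requests is an assumption about rate limits that this tutorial does not state, and the sketch assumes the Tweets are returned in a top-level data array next to the meta object.

```python
import json
import time
import urllib.parse
import urllib.request

SEARCH_ALL_URL = "https://api.twitter.com/2/tweets/search/all"


def fetch_page(params, bearer_token):
    """Request one page of results and return the parsed JSON response."""
    url = SEARCH_ALL_URL + "?" + urllib.parse.urlencode(params)
    headers = {"Authorization": f"Bearer {bearer_token}"}
    request = urllib.request.Request(url, headers=headers)
    with urllib.request.urlopen(request) as response:
        return json.load(response)


def collect_tweets(query, limit, get_page, page_size=500, pause_seconds=1.0):
    """Page through results until `limit` Tweets are collected.

    `get_page` is any callable that takes a dict of query parameters and
    returns the parsed JSON response, which keeps the loop testable.
    """
    tweets = []
    params = {"query": query, "max_results": page_size}
    while len(tweets) < limit:
        payload = get_page(params)
        # Assumption: Tweets arrive in a top-level "data" array.
        tweets.extend(payload.get("data", []))
        next_token = payload.get("meta", {}).get("next_token")
        if not next_token:
            break  # No further pages for this query.
        params = {**params, "next_token": next_token}
        time.sleep(pause_seconds)  # Assumed pause; check current rate limits.
    return tweets[:limit]
```

A call might look like collect_tweets("covid", 2000, lambda p: fetch_page(p, token)), where token is your own Bearer Token. Note that the final page can return more Tweets than the limit needs. The extra Tweets are discarded here but may still count towards the Tweet cap, so choose the limit and page_size together.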

Below are some resources that can help you when using the full-archive search endpoint. We would love to hear your feedback. Reach out to us on @TwitterDev or on our community forums with questions about this endpoint.

Additional resources
